Latency vs. Throughput and Video Bandwidth: What Broadcasters Need to Know

Low latency streaming is a topic with a lot of buzz in the live streaming world. Many broadcasters aim for the lowest latency possible to give their viewers a more lifelike experience.

There is a lot that goes on behind the scenes that determines how much latency a live stream will have. This is related to throughput, bandwidth, and other technically complex aspects.

In this post, we’re going to break down the technical topics of latency, throughput, and bandwidth. We’re going to discuss what each of these means and how they are related to one another. We’ll also discuss how these topics tie into bandwidth consumption.

Table of Contents

- Online Video Streaming: A Technical Overview

- What is Latency?

- What is Throughput?

- What is Bandwidth?

- Latency vs. Throughput vs. Bandwidth

- Calculating Bandwidth

- Final Thoughts

Online Video Streaming: A Technical Overview

Understanding online video streaming from a technical standpoint makes it much easier to digest complex concepts like latency, throughput, and bandwidth.

Online video streaming is made possible by a series of technologies that carry data from one spot to another. These technologies involve a combination of both hardware and software.

The path that the video follows typically looks like this:

Camera → Encoder → Online Video Platform → CDN Servers → Video Player

Video is made up of frames of pixels that are compressed by codecs and carried from one “stop” to the next by streaming protocols.

Currently, the most commonly used protocols for streaming are RTMP for ingest to the online video platform and HLS for delivery to an HTML5 video player. This combination yields low latency, reliable security, and nearly universal compatibility.

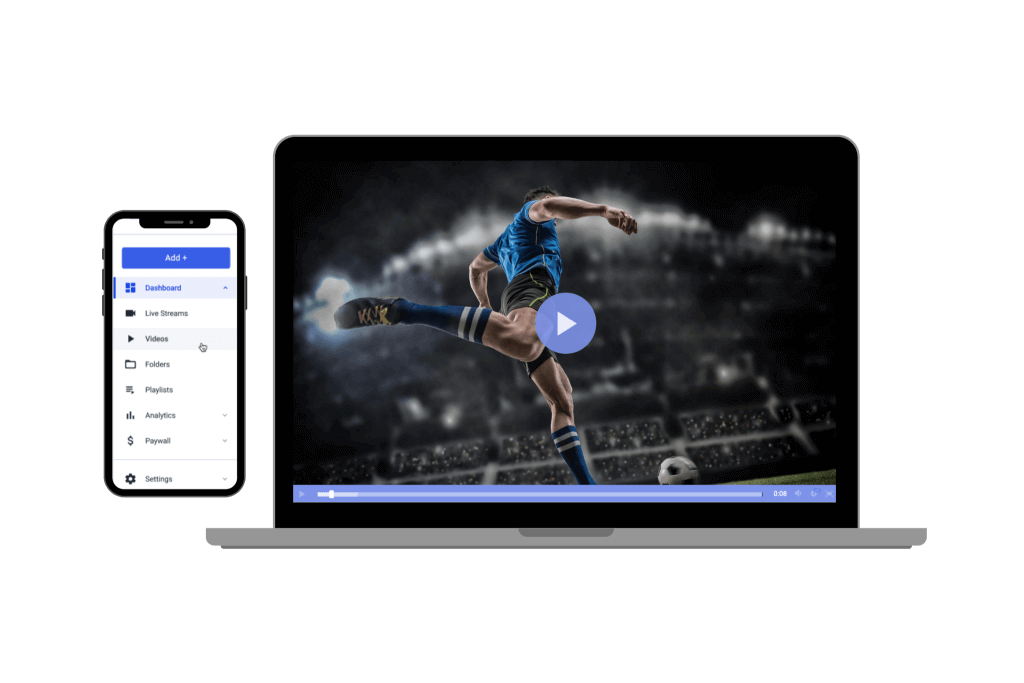

With the support of a powerful online video player, this entire process is made seamless. The complex exchanges and transmissions all take place behind the scenes, and broadcasters can call the shots with clear commands on a graphical user interface.

What is Latency?

Network latency is the time between when a request is made and when it is completed. In broadcasting, latency is the delay from the time a video frame is recorded until the time that it reaches your viewers’ screens. Typically, latency is measured in seconds.

Ultra-low latency and real-time latency are valuable for peer-to-peer streaming or events that require participation from the audience.

While low latency streaming is often desired, it is not the best option for every situation. Some live broadcasts may benefit from a minute or so of latency to prevent any embarrassing mistakes.

Large events broadcast to national or international audiences benefit from some latency, since it gives producers time to censor anything that isn’t appropriate for the audience. Think of Janet Jackson’s notorious “Wardrobe Malfunction,” for example.

A built-in delay also comes in handy during interview broadcasts where the subject may use foul or inappropriate language.

What Affects Latency?

Several things affect latency, including encoder settings, streaming protocols, and internet speed.

Ultimately, the latency of each individual stream comes down to the unique live streaming setup. For example, if you’re using a protocol that supports low latency streaming but your internet isn’t up to par, your latency will be higher.

One of the most important “behind the scenes” elements that affect latency is throughput.

What is Throughput?

Throughput is the amount of data that is transferred in a specific amount of time. In terms of video, it can be tracked by how many packets of data can be transmitted over a specific period.

It is also safe to say that throughput marks the average rate of successful data transmission in a streaming setup.
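As a rough illustration of this definition, the sketch below divides the amount of data delivered by the time it took. The function name and the sample numbers are hypothetical and not taken from any particular streaming tool.

```python
def throughput_mbps(bytes_transferred: int, seconds: float) -> float:
    """Average throughput in megabits per second (1 Mb = 1,000,000 bits)."""
    if seconds <= 0:
        raise ValueError("seconds must be positive")
    # Convert bytes to bits, then divide by elapsed time and scale to megabits.
    return bytes_transferred * 8 / seconds / 1_000_000


# Hypothetical measurement: 7,500,000 bytes delivered in 12 seconds.
sample_mbps = throughput_mbps(7_500_000, 12.0)
print(f"{sample_mbps:.1f} Mbps")
```

With these sample numbers, the average works out to 5 Mbps. Because it is an average, short dips and spikes within the measurement window are smoothed out.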

What Affects Throughput?

Like latency, throughput is affected by different components in your live streaming setup, such as internet speed, protocols, and encoder settings.

What is Bandwidth?

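In network terms, bandwidth is the maximum amount of data a connection can carry in a given amount of time. In streaming, the word is also used for the total amount of data that is transferred. You could measure this by the amount of data transferred for one video, and the units you’d use would usually be megabytes or gigabytes.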

For broadcasters, the importance of bandwidth has mostly to do with how much you have access to and how much it costs to access more.

What Affects Bandwidth?

The amount of bandwidth that is required to transmit your video over the internet depends on the quality and length of your video.

Longer and higher-resolution videos have more pixels to transmit, so naturally, these consume more bandwidth. It’s like carrying 1,000 marbles vs. 1,000,000 marbles. It takes more to carry the latter.

Latency vs. Throughput vs. Bandwidth

Latency, throughput, and bandwidth are all different concepts but they all go hand-in-hand. Changes in any of the three affect the others.

Think of a tunnel with cars traveling through. Bandwidth is the size of the tunnel, throughput measures how many cars are traveling through, and latency is the amount of time it takes for the cars to get through.

To put this in the context of video streaming, your bandwidth is how much data your connection can carry, your throughput is how much data actually gets through in a given time, and your latency is how long it takes to get the data to the user-facing video players.
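One simplified way to see how the three quantities interact is to estimate how long a chunk of video takes to arrive. The sketch below assumes a deliberately basic model: a fixed network delay plus the time needed to push the data through, with throughput capped by bandwidth. It ignores protocol overhead and player buffering, and all names and numbers are hypothetical.

```python
def effective_throughput_mbps(bandwidth_mbps: float, achieved_mbps: float) -> float:
    """Throughput can never exceed the capacity (bandwidth) of the link."""
    return min(bandwidth_mbps, achieved_mbps)


def delivery_time_seconds(size_megabits: float, throughput_mbps: float,
                          latency_seconds: float) -> float:
    """Simplified model: fixed network delay plus time to push the data through."""
    if throughput_mbps <= 0:
        raise ValueError("throughput_mbps must be positive")
    return latency_seconds + size_megabits / throughput_mbps


# Hypothetical numbers: a 2-second chunk encoded at 4500 kbps is 9 megabits.
chunk_megabits = 4500 * 2 / 1000
rate = effective_throughput_mbps(bandwidth_mbps=10.0, achieved_mbps=6.0)
print(f"{delivery_time_seconds(chunk_megabits, rate, latency_seconds=0.05):.2f} s")
```

In this example the chunk takes about 1.55 seconds to arrive, which is less than the 2 seconds of video it contains, so playback can keep up. If throughput dropped below 4.5 Mbps, each chunk would take longer to deliver than to play, and the delay would grow.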

With this in mind, let’s take a look at the individual relationships between each of these three components.

Latency and Throughput

Latency and throughput are closely linked, and they affect one another in the streaming process. Lower latency generally goes with higher throughput, and higher latency goes with lower throughput.

Both of these measures depend on different parts of the streaming setup, so how each of them operates depends on your encoder settings, your network speeds, and the protocols that your setup uses.

Latency and Bandwidth

Latency and bandwidth also go hand-in-hand, but the relationship between the two is a bit more complex.

Bandwidth is how much data can be transmitted and latency is how long that data takes to arrive. All else being equal, higher bandwidth tends to yield lower latency, and lower bandwidth tends to yield higher latency.

Bandwidth and Throughput

The definitions of bandwidth and throughput may seem almost identical, but bandwidth refers to the total volume of data that is being transferred, while throughput measures how much data is transferred in a specific window of time.

Both describe how much data moves, but throughput is always expressed as a rate: data per unit of time.

Another way to look at bandwidth vs. throughput is this: bandwidth is the level at which the system should operate whereas throughput is how it actually operates in action.

Calculating Bandwidth

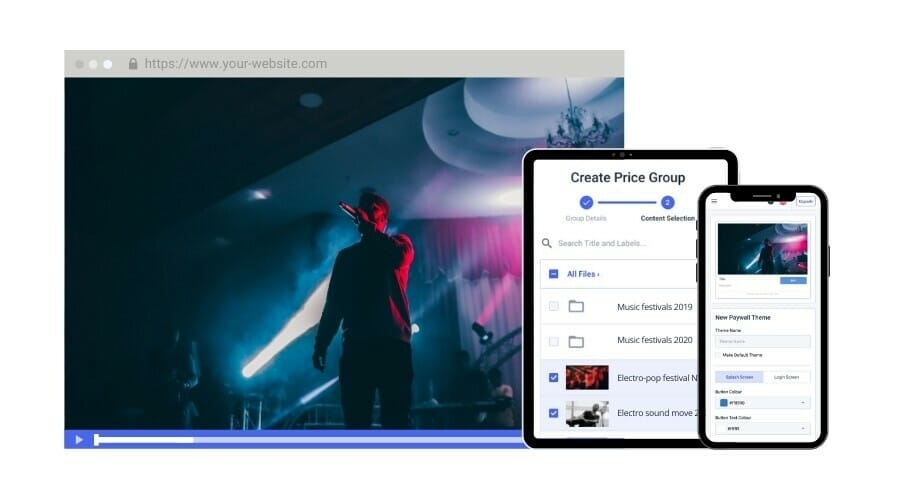

Bandwidth is important in live streaming because how much you use will affect the cost of broadcasting. If you are streaming with the support of a professional online video platform, you’ll either have an allotted amount of bandwidth on your plan or you’ll be able to pay as you go.

Of course, you don’t want to pinch pennies and skimp on bandwidth usage since it will affect your viewers’ experience, but you do want to be mindful of how much your operation will cost.

Bandwidth usage depends on several different things, such as the quality of your streams, how long your stream is, and other similar factors. A high-definition stream is going to consume more bandwidth than a low-definition or standard-definition stream.

Head over to the Dacast pricing page and crunch some numbers in our bandwidth calculator to better understand how much bandwidth you’ll need per month and how much this will cost you.

You’ll fill in the information relating to your usage, including how often you will stream, how many people you expect to tune in, how long they will watch your content, how much storage you’ll need, and your average video bitrate.
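To get a feel for the arithmetic behind this kind of estimate, here is a minimal sketch. It is not the formula used by the Dacast calculator. It simply multiplies bitrate by watch time and audience size, counts video data only, and leaves out storage, audio, and protocol overhead. The function name and the sample inputs are hypothetical.

```python
def monthly_data_gb(bitrate_kbps: int, viewers: int,
                    avg_watch_minutes: int, streams_per_month: int) -> float:
    """Estimate monthly data transfer in gigabytes (1 GB = 1,000,000,000 bytes)."""
    # Bits delivered to one viewer during one stream.
    bits_per_viewer = bitrate_kbps * 1000 * avg_watch_minutes * 60
    # Scale by audience size and number of streams in the month.
    total_bits = bits_per_viewer * viewers * streams_per_month
    return total_bits / 8 / 1_000_000_000


# Hypothetical plan: four streams a month at 1900 kbps,
# 200 viewers per stream, each watching 30 minutes on average.
estimate = monthly_data_gb(1900, viewers=200, avg_watch_minutes=30, streams_per_month=4)
print(f"{estimate:.0f} GB per month")
```

With these inputs the estimate comes to 342 GB per month. Doubling the bitrate, the audience, or the watch time doubles the total, which is why the average bitrate matters so much for cost.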

Calculating Bitrate

As we mentioned, you’ll need to know your average bitrate to get a more accurate bandwidth requirement calculation. Higher resolutions call for higher bitrate settings. When the viewer is connected to high-speed internet, higher bitrate settings will also yield higher-quality video.

Here is a breakdown of suggested bitrate and resolution settings for ultra-low definition, low definition, standard definition, high definition, and full high-definition streaming.

| | ULD | LD | SD | HD | FHD |
|---|---|---|---|---|---|
| Name | Ultra-Low Definition | Low Definition | Standard Definition | High Definition | Full High Definition |
| Video Bitrate (kbps) | 350 | 350 – 800 | 800 – 1200 | 1200 – 1900 | 1900 – 4500 |
| Resolution Width (px) | 426 | 640 | 854 | 1280 | 1920 |
| Resolution Height (px) | 240 | 360 | 480 | 720 | 1080 |
| H.264 Profile | Main | Main | High | High | High |

As we mentioned, a higher bitrate only yields a higher quality viewing experience when the viewers have an adequate internet connection.

It is unreasonable to expect every viewer to have identical internet connections, so many broadcasters use multi-bitrate streaming with an adaptive bitrate video player to produce the best results for the largest number of people.

Multi-bitrate streaming broadcasts a stream in multiple renditions with multiple resolutions. The adaptive bitrate video player requests the appropriate rendition based on each viewer’s specific internet speed.

Lower bitrate renditions of the stream will consume less bandwidth than higher bitrate renditions.
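The selection step can be sketched roughly as follows. Real adaptive bitrate players use more elaborate logic, so treat this as an illustration only. The ladder uses the upper bitrate of each tier from the table above, while the `headroom` safety factor of 0.8 and all of the names are assumptions made for this example.

```python
from typing import NamedTuple


class Rendition(NamedTuple):
    name: str
    height: int
    bitrate_kbps: int


# Upper bitrate of each tier from the table above.
LADDER = [
    Rendition("ULD", 240, 350),
    Rendition("LD", 360, 800),
    Rendition("SD", 480, 1200),
    Rendition("HD", 720, 1900),
    Rendition("FHD", 1080, 4500),
]


def pick_rendition(measured_kbps: float, ladder=LADDER, headroom: float = 0.8) -> Rendition:
    """Pick the highest-bitrate rendition that fits the viewer's connection."""
    # Leave some spare capacity so small dips do not cause buffering (assumed factor).
    budget = measured_kbps * headroom
    affordable = [r for r in ladder if r.bitrate_kbps <= budget]
    if not affordable:
        # Nothing fits, so fall back to the lightest rendition.
        return min(ladder, key=lambda r: r.bitrate_kbps)
    return max(affordable, key=lambda r: r.bitrate_kbps)


print(pick_rendition(3000).name)  # a viewer measured at about 3 Mbps
print(pick_rendition(200).name)   # a viewer on a very slow connection
```

A viewer measured at about 3 Mbps gets the HD rendition here, while a viewer at 200 kbps falls back to ULD. A player would repeat this check as conditions change, switching renditions during playback.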

Final Thoughts

Latency is an important factor in live streaming since it affects your viewers’ experience. Most reliable online video platforms will keep most of the technical aspects of latency, bandwidth, and throughput behind the scenes, but it is important to have a basic understanding of these concepts.

Having some basic understanding is particularly important since these things affect both your streaming costs and the user experience.

If you are looking for a live streaming solution that is capable of low latency streaming, look no further. Dacast may be the option for you.

In addition to low latency streaming, Dacast offers a wide variety of professional video streaming features. These include video monetization, secure streaming, access to a powerful video CMS, white-label streaming, brand customization, Expo video galleries, and more.

Interested in giving Dacast a try? Take advantage of our 14-day risk-free trial. All you have to do to get started is create a Dacast account. No binding contract or credit card is required.

For regular live streaming tips and exclusive offers, you can join the Dacast LinkedIn group.